This posting was prompted by a question on one of the BA forums on Linked In. The original question was:

I gave an answer in the forum, but here is an expansion, and some ponderings.

First of all, let’s set out the terms of reference. The question asks about a “large unfamiliar” database. I think we can assume that “unfamiliar” is “one that we haven’t encountered before”, but what is “LARGE”? To me “large” could be:

- Lots of tables

- Many terror-bytes 😉

- Lots of transactions

- Lots of users

- There may be other interpretations

I’m going to go with “Lots of tables” with the definition or “lots of” being:

“more than I can conveniently hold in my head at one time”

I’ve also assumed that we are working with a “transactional database” rather than a “data warehouse”.

Preparation

Gilian, the questioner was given some good suggestions, which I summarised as “Collecting Information” or perhaps “Preparation”:

- Understand objectives of “The Business”

- Understand the objectives of “This Project” (Digging into the Database)

- Collect relevant Organisation charts and find out who is responsible for doing what

- Collect relevant Process Models for the business processes which use the database

- Get hold of, generate, or otherwise create a data model (Entity Relationship Diagram or similar)

Of these, the one which is specific to working with a Database is the ERD. Having a diagram is an enormous help in visualising how the bits of the database interact.

Chunking

For me, the next step is to divide the model into “chunks” containing groups of entities (or tables). This allows you to:

- Focus – on one chunk

- Prioritise – one chunk is more important, interesting or will be done before, another

- Estimate – chunks are different sizes

- Delegate – you do that chunk, I’ll do this one

- And generally “Manage” the work; do whatever are the project objectives.

I would use several techniques to divide the database or model up into chunks. These techniques work equally well with logical and physical data models. It can be quite a lot of work if you have a large model. None of the techniques are particularly complicated, but they are a little tricky to explain in words.

Here is a list of techniques:

- Layering

- Group around Focal Entities

- Process Impact Groups

- Realigning

Organise the Data Model

I cannot over-emphasis how important it is to have a well-laid out diagram. Some tools do it well, some do it less well. My preference is to have “independent things” at the top.

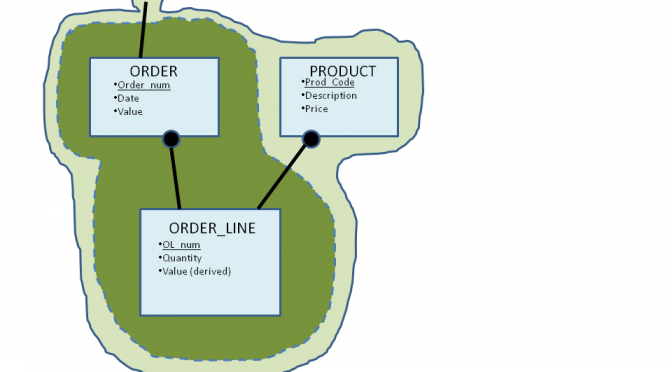

I’ve invented a business.

- We take ORDERs from CUSTOMERs.

- Each ORDER consists of one or more ORDER_LINES and each line is for a PRODUCT.

- We Deliver what the customer wants as DELIVERY CONSIGNMENTS.

- Each CONSIGNMENT contains one or more Batches of product (I’ve haven’t got a snappy name for that).

- We know where to take the consignment by magic, because we don’t have an Address for the Customer!

- We reconcile quantities delivered against quantities ordered, because we sometimes have to split an order across several deliveries.

- That’s it!

Layering

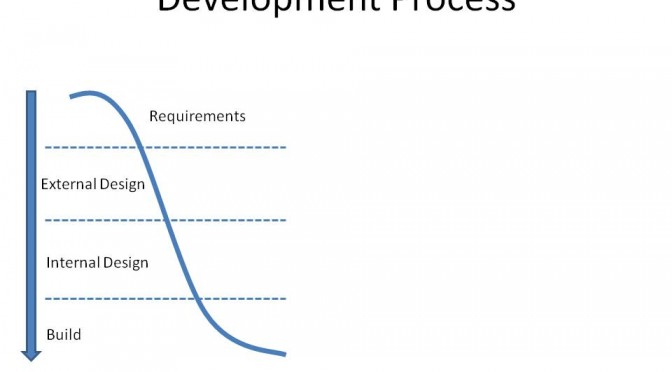

“Layering” involves classifying the entities or groups of entities as being about:

- Classifications

- Things

- Transactions

- Reconciliations

Things

Let’s start with “Things”. Things are can be concrete or they can be abstract. We usually record a “Thing” because it is useful in doing our business. Examples of Things are:

- People

- Organisations

- Products

- Places

- Organisation Units (within our organisation, or somebody elses)

Classifications

Every business has endless ways of classifying “Things” or organising them into hierarchies. I just think of them as fancy attributes of the “Things” unless I’m studying them in their own right.

Note: “Transactions” can have classifications too (in fact almost anything can and does), I’ve just omitted them from the diagram!

Note: The same structure of “Classification” can apply to more than one thing. This makes sense if, for example, the classification is a hierarchy of “geographic area”. Put it in an arbitrary place, note that it belongs in other places as well, and move on!

Transactions

Transactions are what the business is really interested in. They are often the focus of Business Processes.

Where there are parts of Transactions (eg Order_Line) keep the child with the parent.

Reconciliations

Reconciliations” (between Transactions) occur when something is “checked against something else”. In this case we are recording that “6 widgits have been ordered” and that “3 (or 6) have been delivered”.

If you use these “layers”, arranged as in the diagram, you will very likely find that the “One-to-manys” point from the top (one) down (many) the page.

Groups around Focal Entities

To do this, pick an entity which is a “Thing” or a “Transaction” then bring together the entities which describe it, or give more detail about it. Draw a line round it, give it a name, even if only in your head!

- “Customer and associated classifications” and

- “Order and Order_line” are candidate groups.

Process Impact Groups

To create a “Process Impact Group”

- Select a business process

- Draw lines around the entities which it: creates, updates and refers to as part of doing its work.

- You should get a sort of contour map on the data model.

In my example the processes are:

- Place Order

- Assemble Delivery Consignment

- Confirm Delivery (has taken place)

It is normal for there to be similarities between “Process Impact Groups” and “Focal Entity Groups”. In fact, it would be unusual if there were not similarities!

Realigning

Try moving parts under headers (so, Order_line under Order) and reconciliations under the transaction which causes them. In the diagram, I’ve moved “Delivered Order Line” under “Delivery”, because it’s created by “Delivery related processes” rather than when the Order is created.

Finally, “Chunking”

Based on the insights you have gained from the above, draw a boundary around your “chunks”.

The various techniques are mutually supportive, not mutually exclusive. The chunks are of arbitrary size. If it is useful, you can:

- combine neighbouring chunks together or

- you can use the techniques (especially “Focal entities” and “Process Entity Groups”) to break them down until you reach a single table/entity.

Tools

My preferred tools for doing this are: a quiet conference room, late at night; the largest whiteboard I can find; lots of sticky Post-its or file cards (several colours); a pack of whiteboard pens; black coffee on tap and the prospect of beer when I’ve finished. For a large database (several hundred tables) it can take several days!

Once you’ve done all this, then all you have to do, is do the work!

I hope you have as much fun doing it as I had writing about it! J